Every organisation now has an AI strategy. Very few have asked the harder question: who owns the intelligence it produces?

For the past two years, the default enterprise approach has been straightforward. Pick a large language model. Connect your data. Ask it questions. The model provides the reasoning, you provide the documents, and somewhere in between, decisions get made.

But this architecture has a structural flaw that is becoming harder to ignore.

When your reasoning layer is a generic model trained on the open web, your organisational context — the way you make decisions, your historical patterns, your hard-won institutional knowledge — gets flattened into an average. The model doesn’t know what matters to you. It knows what matters to everyone, which is a very different thing.

This is the sovereignty problem.

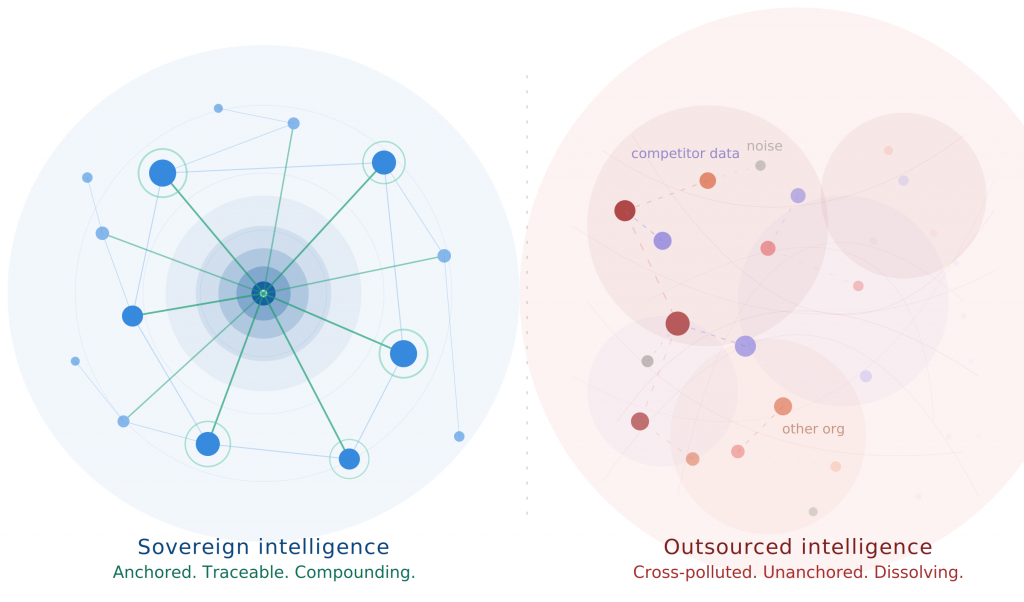

It isn’t about nationalism or data residency, though those matter too. It’s about something more fundamental: if your AI cannot distinguish between your organisation’s actual strategic priorities and a generic best practice pulled from the training corpus, then it isn’t really your intelligence. It’s a shared utility with your logo on it.

The implications compound quickly. Boards are increasingly expected to demonstrate that strategic decisions are traceable and auditable. Governance frameworks worldwide are tightening around AI accountability. But you cannot audit reasoning that lives inside a black box you don’t control. You cannot trace a conclusion back to its source if that source is a probabilistic blend of the entire internet.

And there is a competitive dimension. If every organisation in your sector is plugging into the same model, the same way, the only remaining differentiator is your own unstructured knowledge — the documents, conversations, and institutional memory that no one else has. Yet most of that knowledge sits unmapped, unconnected, and invisible to the very AI systems that are supposed to unlock it.

The organisations that will lead in the next phase are not the ones with the best model. Models are commoditising rapidly. The leaders will be the ones who have mapped, structured, and anchored their own knowledge so that any model — today’s or tomorrow’s — can reason with it faithfully.

Sovereign intelligence isn’t a product category. It’s an architectural decision. It means owning the orchestration layer between your knowledge and whatever AI you choose to use. It means every insight can be traced to a source your board can inspect. It means your institutional memory compounds over time rather than resetting with every new model release.
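
To make that architectural decision concrete, here is a minimal sketch of an orchestration layer in Python. It is an illustration under stated assumptions, not a description of how Perceptor or any other product works. Every name in it (`SourcedPassage`, `TracedAnswer`, `retrieve`, `ask`) is hypothetical, and the keyword-overlap ranking is a deliberately naive stand-in for real retrieval. The point is the shape: the model is just a swappable function, and every answer carries the identifiers of the sources it was given.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class SourcedPassage:
    source_id: str  # hypothetical identifier, e.g. a document name or record ID
    text: str


@dataclass(frozen=True)
class TracedAnswer:
    answer: str
    sources: List[str]  # source_ids of every passage shown to the model


# Any model is treated as a function from prompt to text, so it can be swapped freely.
Model = Callable[[str], str]


def retrieve(question: str, store: List[SourcedPassage], k: int = 2) -> List[SourcedPassage]:
    """Rank passages by naive keyword overlap (an assumption; a stand-in for real retrieval)."""
    terms = set(question.lower().split())
    scored = [(len(terms & set(p.text.lower().split())), p) for p in store]
    # Keep only passages that share at least one term, best match first.
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [p for _, p in ranked[:k]]


def ask(question: str, store: List[SourcedPassage], model: Model) -> TracedAnswer:
    """Build a prompt from the organisation's own passages and record which ones were used."""
    passages = retrieve(question, store)
    context = "\n".join(f"[{p.source_id}] {p.text}" for p in passages)
    prompt = f"Answer using only these sources:\n{context}\n\nQuestion: {question}"
    return TracedAnswer(answer=model(prompt), sources=[p.source_id for p in passages])
```

Because `ask` accepts any `Model`, replacing today's model with tomorrow's changes one argument, while the store of sourced passages and the audit trail in `TracedAnswer.sources` stay under the organisation's control.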

At MangoMoon, this is what we built Perceptor to do — not to replace the models, but to ensure that whatever model you use is anchored in your knowledge, governed by your standards, and accountable to your board.

The question is no longer “which AI should we use?”

The question is: who owns the map of what you know?